Robotics and Embodied AI Lab (REAL)

The Robotics and Embodied AI Lab (REAL) is a research lab in DIRO at the Université de Montréal and is also affiliated with Mila. REAL is dedicated to making generalist robots and other embodied agents.

We are always looking for talented students to join us as full-time students or visitors. To learn more, follow the link below.

Learn more

Projects

Perpetua - Multi-Hypothesis Persistence Modeling for Semi-Static Environments

An efficient method to estimate and predict feature persistence in semi-static environments using a mixture formulation that is adaptable online and robust to missing observations.

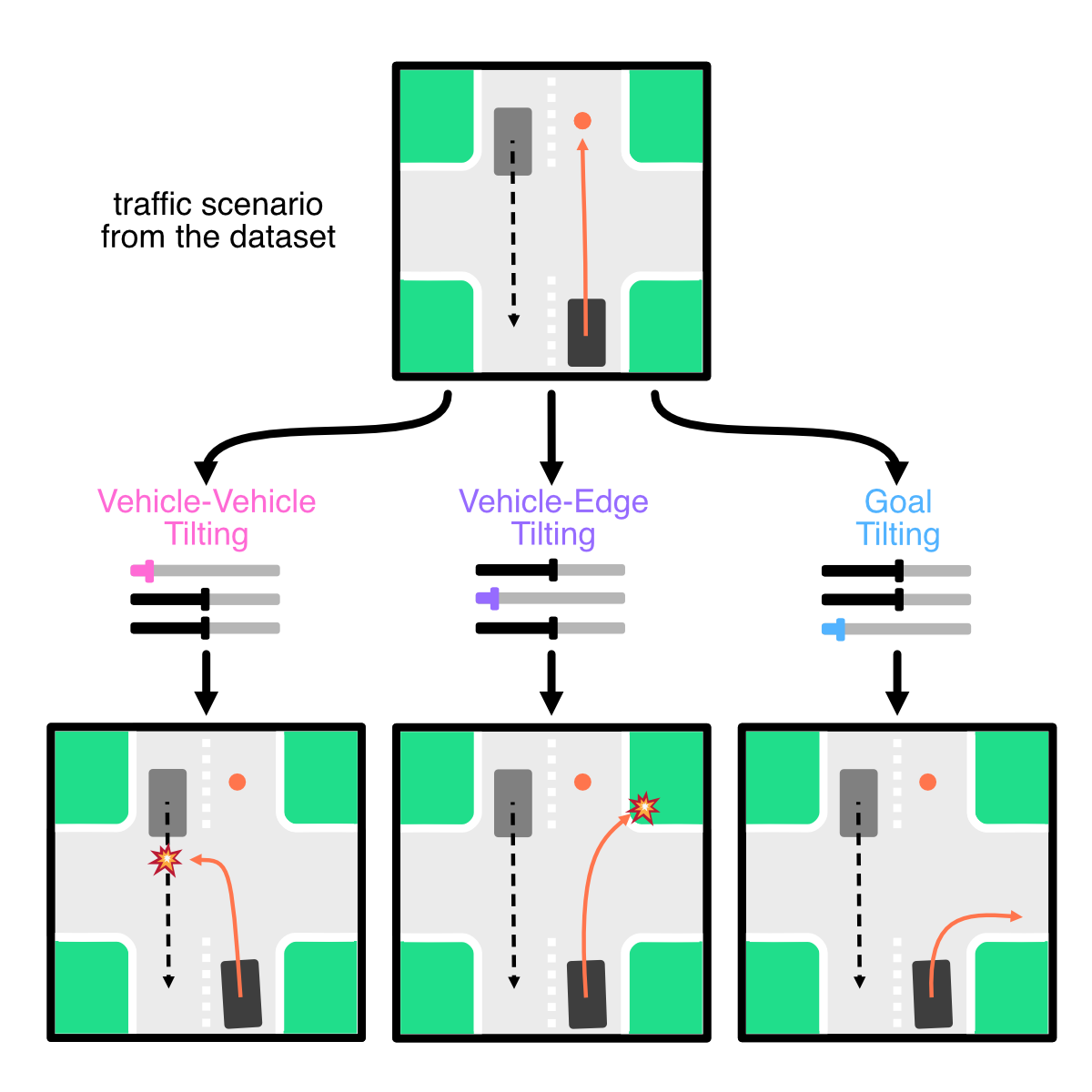

CtRL-Sim: Reactive and Controllable Driving Agents with Offline Reinforcement Learning

CtRL-Sim is a framework that leverages return-conditioned offline reinforcement learning (RL) to enable reactive, closed-loop, and controllable behaviour simulation within a physics-enhanced Nocturne environment.

Collaborators:

- Roger Girgis

- Bruno Carrez

- Florian Golemo

- Felix Heide

- Chris Pal

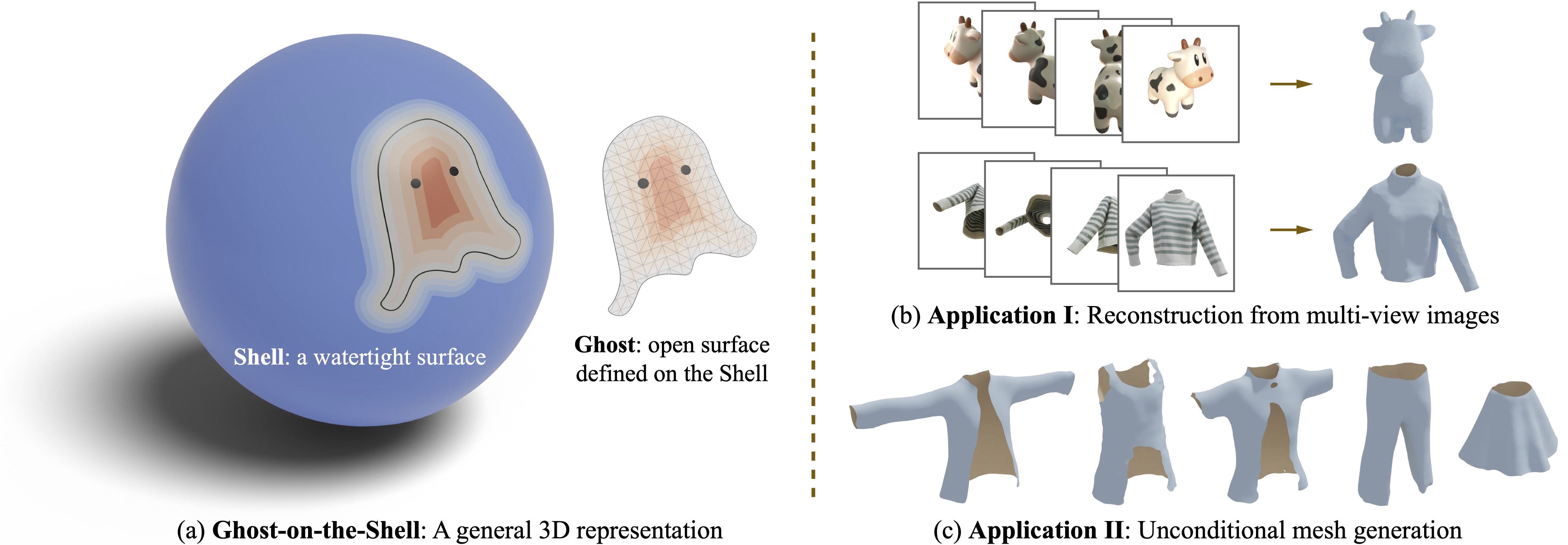

Ghost on the Shell: An Expressive Representation of General 3D Shapes

G-Shell models both watertight and non-watertight meshes with different topologies in a differentiable way. Mesh extraction with G-Shell is stable: it requires no MLP gradients, only sign checks on grid vertices.

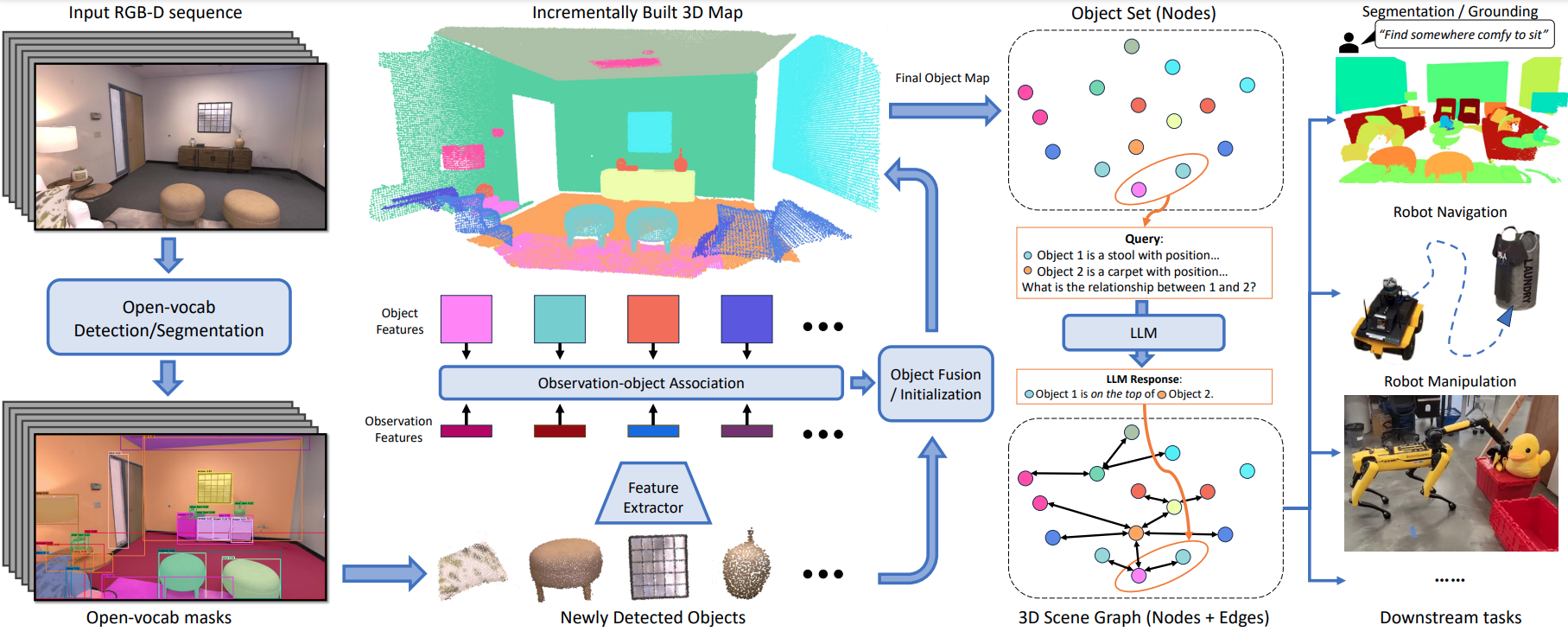

ConceptGraphs: Open-Vocabulary 3D Scene Graphs for Perception and Planning

ConceptGraphs builds an open-vocabulary scene graph from a sequence of posed RGB-D images. Compared to our previous approach, ConceptFusion, this representation is sparser and better captures the relationships between entities and objects in the graph.

Collaborators:

- Qiao Gu

- Krishna Murthy Jatavallabhula

- Corban Rivera

- William Paul

- Rama Chellappa

- Chuang Gan

- Celso Miguel de Melo

- Joshua B. Tenenbaum

- Antonio Torralba

- Florian Shkurti

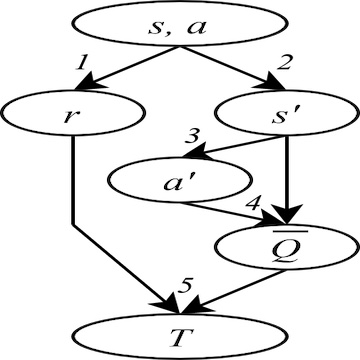

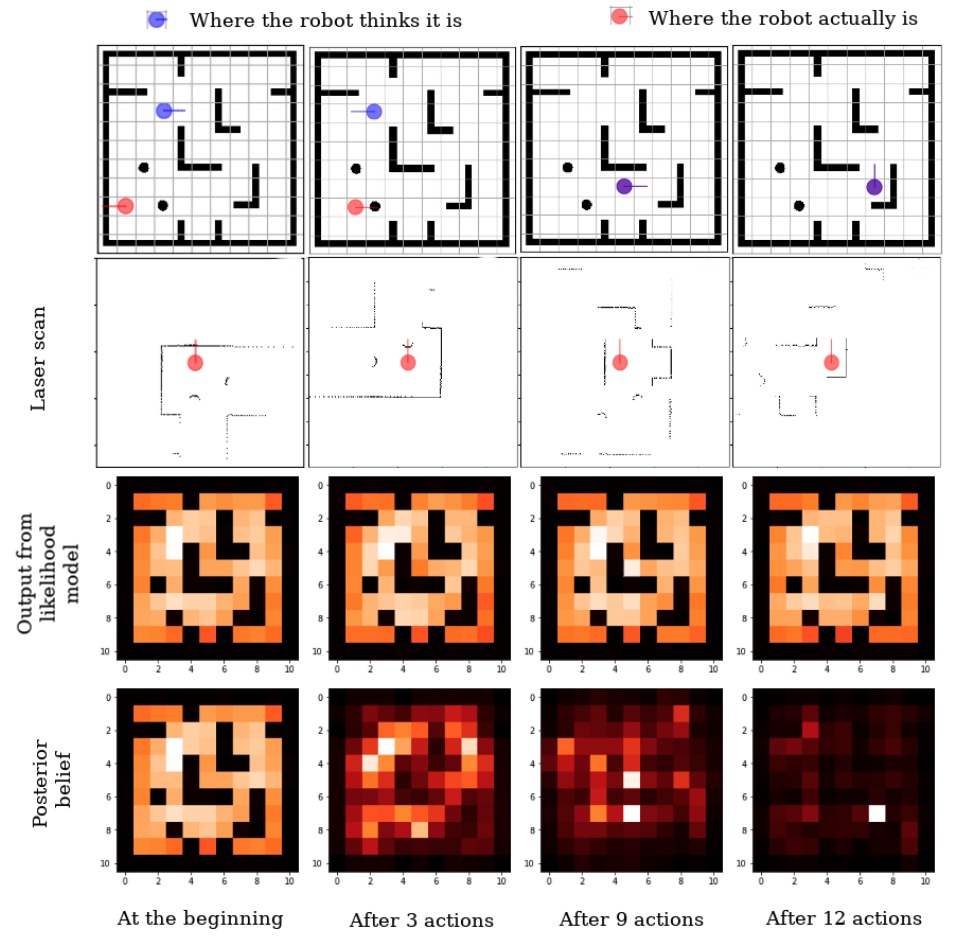

The Harmonic Exponential Filter for Nonparametric Estimation on Motion Groups

An exact approach to computing the posterior belief of the Bayes filter on a compact Lie group, based on harmonic exponential distributions and harmonic analysis. The method is exact up to the band limit of a Fourier transform and it can model multimodal distributions.

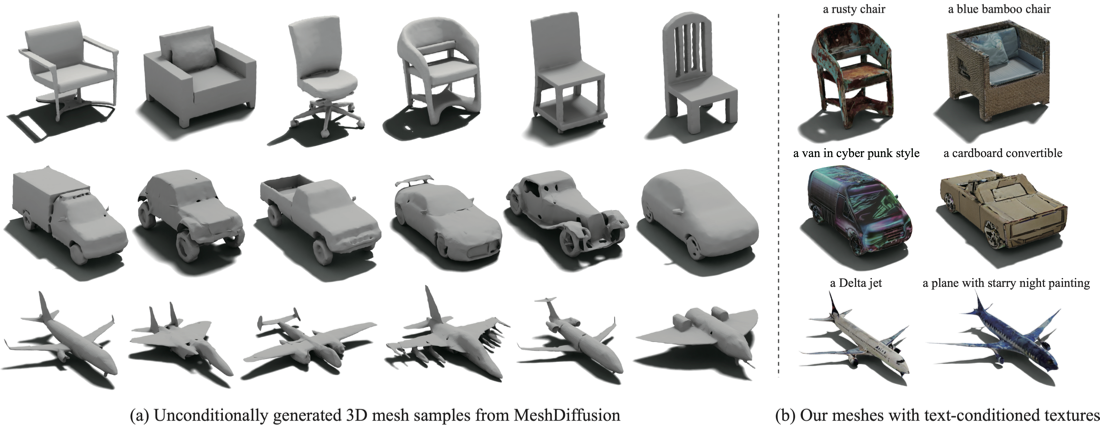

MeshDiffusion: Score-based Generative 3D Mesh Modeling

MeshDiffusion is the first 3D diffusion model that directly generates watertight meshes of arbitrary topology through a differentiable grid-based representation. It enables tasks such as unconditional generation and single-view reconstruction of 3D meshes.

Collaborators:

- Yao Feng

- Weiyang Liu

- Derek Nowrouzezahrai

- Michael J. Black

ConceptFusion: Open-set Multimodal 3D Mapping

ConceptFusion builds open-set 3D maps that can be queried via text, click, image, or audio. Given a series of RGB-D images, our system builds a 3D scene representation that is inherently multimodal. Because it leverages foundation models such as CLIP, it doesn’t require any additional training or finetuning.

Collaborators:

- Krishna Murthy Jatavallabhula

- Qiao Gu

- Mohd Omama

- Tao Chen

- Alaa Maalouf

- Shuang Li

- Ganesh Iyer

- Soroush Saryazdi

- Nikhil Varma Keetha

- Ayush Tewari

- Joshua B. Tenenbaum

- Celso Miguel de Melo

- K. Madhava Krishna

- Florian Shkurti

- Antonio Torralba

One-4-All - Neural Potential Fields for Embodied Navigation

An end-to-end, fully parametric method for image-goal navigation that leverages self-supervised and manifold learning to replace a topological graph with a geodesic regressor. During navigation, the geodesic regressor is used as an attractor in a potential function defined in latent space, which lets navigation be framed as a minimization problem.
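The idea of navigating by minimizing a potential can be illustrated with a toy sketch. Everything here is an assumption made for illustration: the 2D "latent" points, the Euclidean distance standing in for a learned geodesic regressor, and the hypothetical names `attractor`, `greedy_step`, and `navigate`. It is not the One-4-All implementation.

```python
import math


def attractor(z, goal):
    # Stand-in for a learned geodesic regressor (assumption): Euclidean distance.
    return math.dist(z, goal)


def greedy_step(z, goal, step=0.5):
    """Return the neighbouring point with the lowest potential value."""
    moves = [(step, 0.0), (-step, 0.0), (0.0, step), (0.0, -step)]
    candidates = [(z[0] + dx, z[1] + dy) for dx, dy in moves]
    return min(candidates, key=lambda c: attractor(c, goal))


def navigate(start, goal, tol=1e-9, max_steps=100):
    """Repeatedly step toward lower potential until the goal is reached."""
    path = [start]
    while attractor(path[-1], goal) > tol and len(path) <= max_steps:
        path.append(greedy_step(path[-1], goal))
    return path


path = navigate((0.0, 0.0), (1.0, 1.0))
```

In this toy setting each step simply picks the candidate that lowers the potential the most; a full potential function would typically also include terms beyond the attractor.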

f-Cal - Calibrated aleatoric uncertainty estimation from neural networks for robot perception

f-Cal is a method for calibrating probabilistic regression networks. Typical Bayesian neural networks are shown to be overconfident in their predictions, yet reliable, calibrated uncertainty estimates are critical when those predictions are used in downstream tasks. f-Cal is a straightforward loss function that can be used to train any probabilistic neural regressor and obtain calibrated uncertainty estimates.

Inverse Variance Reinforcement Learning

Improving sample efficiency in deep reinforcement learning by using uncertainty estimation to mitigate the impact of heteroscedastic noise in the bootstrapped target.
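As a rough illustration of inverse-variance weighting, the sketch below down-weights squared errors whose targets have high estimated variance. It is a generic example under stated assumptions, not the project's loss: the function names, the `eps` stabilizer, and the normalization of weights to sum to one are all hypothetical choices.

```python
def inverse_variance_weights(variances, eps=1e-8):
    """Weights proportional to 1 / (variance + eps), normalized to sum to 1."""
    raw = [1.0 / (v + eps) for v in variances]
    total = sum(raw)
    return [r / total for r in raw]


def weighted_squared_error(predictions, targets, target_variances):
    """Squared error where noisier targets contribute less to the loss."""
    weights = inverse_variance_weights(target_variances)
    return sum(w * (p - t) ** 2
               for w, p, t in zip(weights, predictions, targets))


# The second target is four times noisier, so it gets a quarter of the raw weight.
weights = inverse_variance_weights([1.0, 4.0])
loss = weighted_squared_error([1.0, 1.0], [0.0, 3.0], [1.0, 4.0])
```

With these inputs the weights come out close to 0.8 and 0.2, so the large error on the noisy target is discounted rather than dominating the update.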

Lifelong Topological Visual Navigation

A learning-based topological visual navigation method with graph update strategies that improve lifelong navigation performance over time.

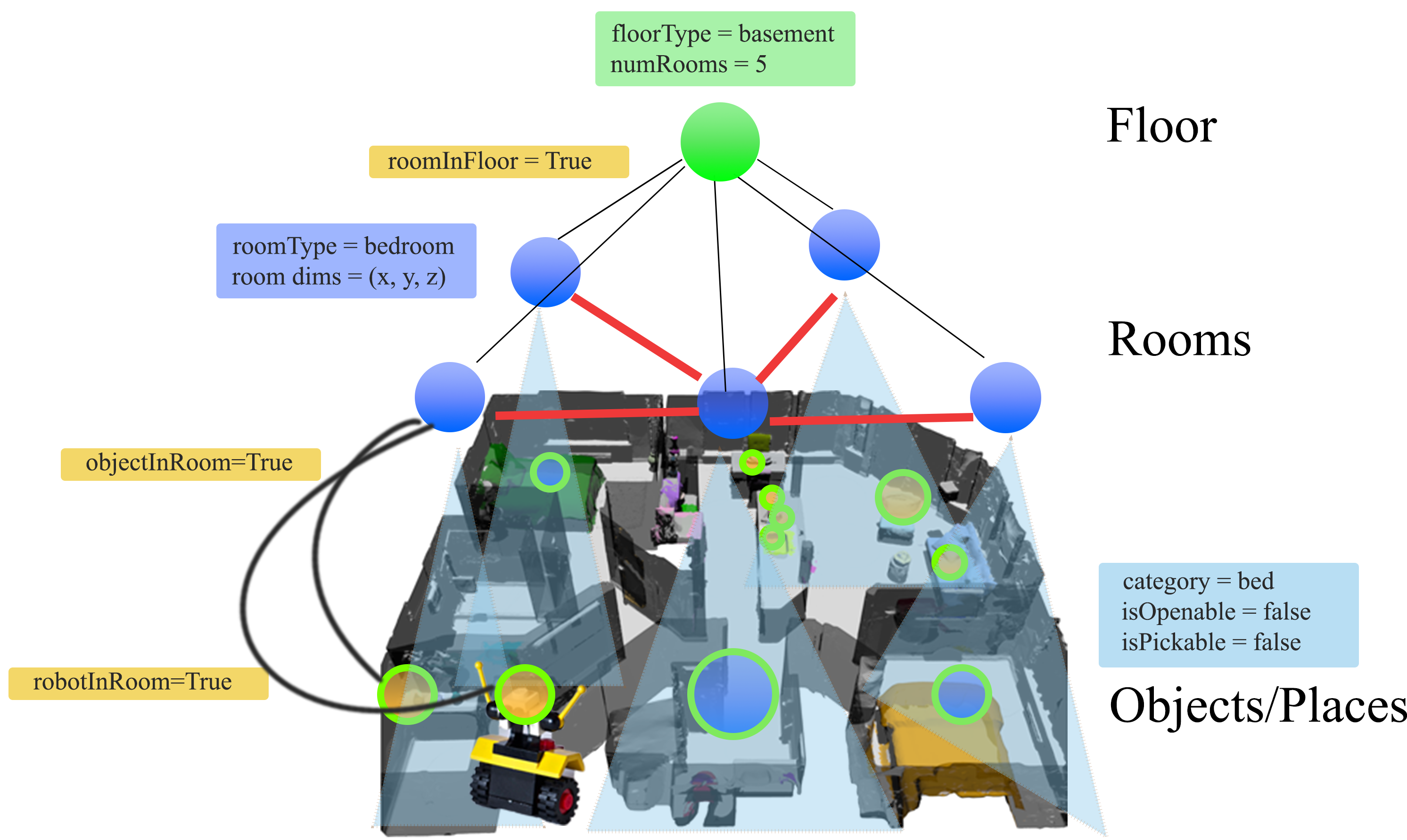

Taskography - Evaluating robot task planning over large 3D scene graphs

Taskography is the first large-scale robotic task planning benchmark over 3D scene graphs (3DSGs). While most benchmarking efforts in this area focus on vision-based planning, we systematically study symbolic planning to decouple planning performance from visual representation learning.

∇Sim: Differentiable Simulation for System Identification and Visuomotor Control

gradSim is a framework that overcomes the dependence on 3D supervision by leveraging differentiable multiphysics simulation and differentiable rendering to jointly model the evolution of scene dynamics and image formation.

Collaborators:

- Miles Macklin

- Vikram Voleti

- Linda Petrini

- Martin Weiss

- Jerome Parent-Levesque

- Kevin Xie

- Kenny Erleben

- Florian Shkurti

- Derek Nowrouzezahrai

- Sanja Fidler

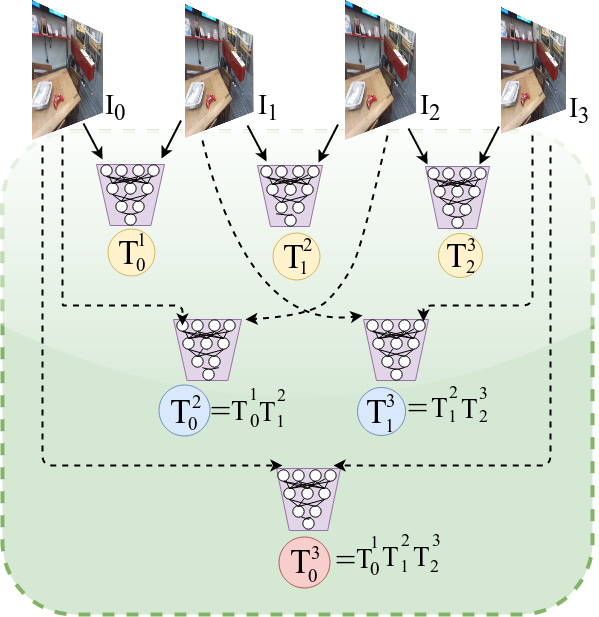

∇SLAM: Dense SLAM meets Automatic Differentiation

gradslam is an open-source framework providing differentiable building blocks for simultaneous localization and mapping (SLAM) systems. We enable the usage of dense SLAM subsystems from the comfort of PyTorch.

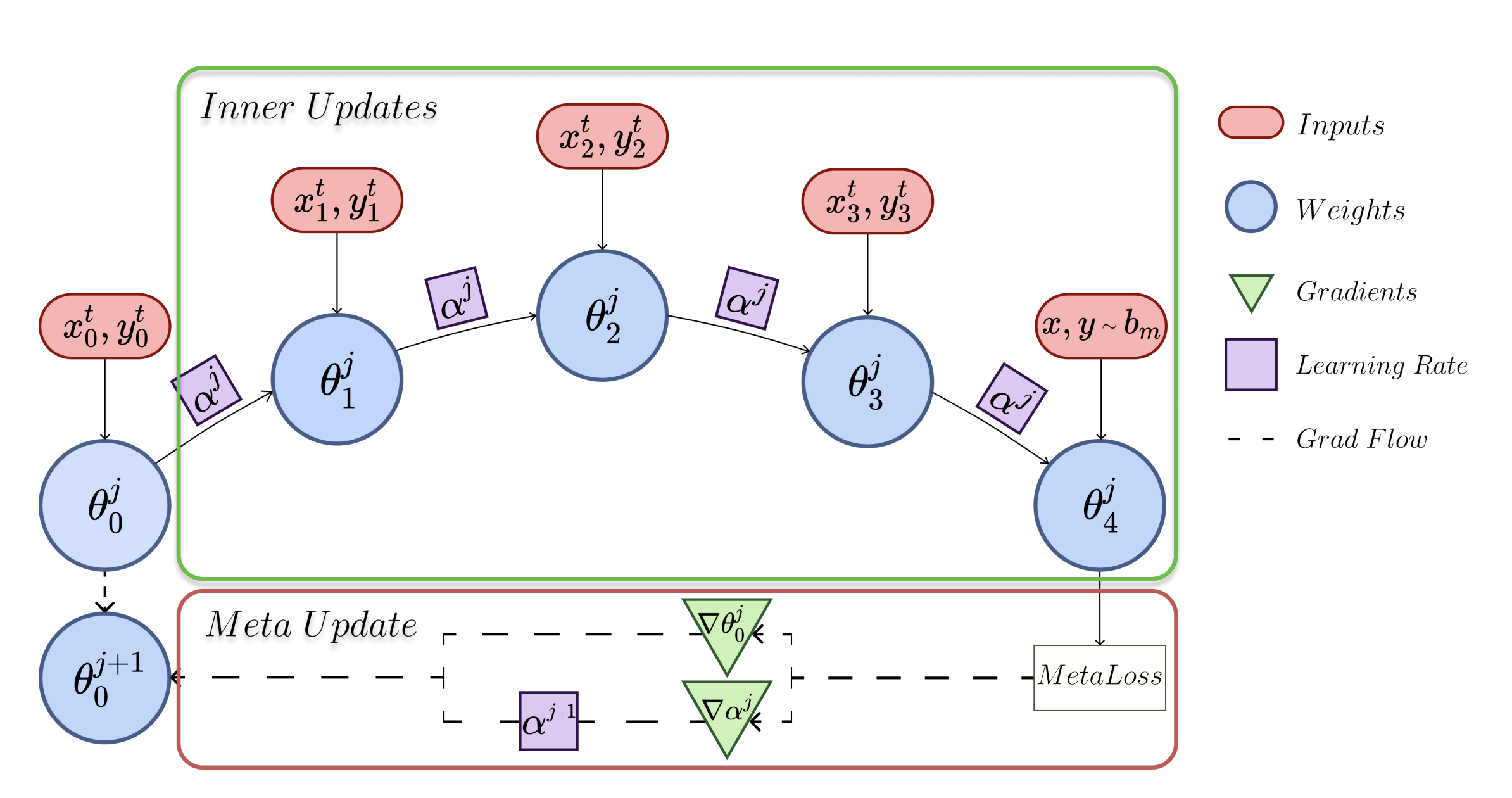

La-MAML: Look-ahead Meta Learning for Continual Learning

Look-ahead meta-learning for continual learning

Collaborators:

- Karmesh Yadav

Active Domain Randomization

Making sim-to-real transfer more efficient

Collaborators:

- Chris Pal

Geometric Consistency for Self-Supervised End-to-End Visual Odometry

A self-supervised deep network for visual odometry estimation from monocular imagery.

Deep Active Localization

Learned active localization, implemented on “real” robots.

Collaborators:

- Keehong Seo